I’ve been running FreeNAS for a file server for some years now, and really like the capabilities of ZFS on that system. ZFS on Linux now seems to be pretty mature, and there’s documentation out there (e.g., https://github.com/zfsonlinux/pkg-zfs/wiki/HOWTO-install-EL7-(CentOS-RHEL)-to-a-Native-ZFS-Root-Filesystem) on installing CentOS 7 on a ZFS root filesystem. Has anyone played with Neth in this environment?

OK, I have Neth running now on ZFS. It’s too much for me to write up at the moment, but in short, I started with a minimal CentOS 7 installation, moved that onto ZFS following (mostly) these instructions (though updated for the current CentOS using the information from the ZFS on Linux wiki), then installed Neth following the documentation.

That got Neth running on a single-disk pool. My goal would be to (1) run it on a multi-disk pool so I’d have redundancy, and (2) use large (>2 TiB) disks, which appear to require a GPT partition table. I’d also need either of the disks to be bootable–otherwise, if the wrong disk fails, the system won’t boot, which kind of defeats the purpose. This blog post has the instructions I followed to GPT partition two more virtual disks, both larger than the first. Added partition two of both of those virtual disks to the pool with zpool attach (so, for the time being, it’s a three-way mirror), and installed grub to the boot block of each with grub2-install /dev/sdb (and /dev/sdc). The pool is now resilvering (resyncing), and in the meantime, I’m configuring the test server, installing stuff from the software center, etc.

For some reason, the instructions I followed do not create the pool with the autoexpand property turned on. You’d want that. zpool set autoexpand=on rpool resolves this.

Once the resilver completes, I expect I should be able to zpool detach the first virtual disk, and the pool will then become a two-way mirror. It should also automatically expand to the size of the new virtual disks.

Edit: Well, that mostly worked. I did zpool detach rpool scsi-0QEMU_QEMU_HARDDISK_drive-scsi0-part1, and in a few seconds, I was returned to the command prompt. zpool status now showed a two-way mirror, and zpool list showed that it had grown to the size of the new (larger) virtual disks. Getting it to boot took a little bit of doing, though; the system didn’t initially see either of the two new virtual disks as bootable. Once I removed the old one, and reassigned the SCSI IDs of the remaining disks, the system booted. I’m thinking this wouldn’t be an issue with actual, physical disks.

Something I’m noticing, and don’t have a ready explanation for: any time I make a change to the pool, rebooting fails and drops me to a dracut> prompt. The error says that it’s unable to import the pool. At the dracut> prompt, I run zpool import -f rpool, and it imports without issues. I then reboot, and it’s fine.

Edit 2: I’ve realized that when I partitioned those two virtual disks, I didn’t create swap partitions. Probably should have done that.

Hope this is useful to someone else–I’m mostly posting it so I’ll have the links handy for my own sake in the future, but it should be enough for others to get started with.

I’ve now tried this on “bare metal” rather than a VM, and here is much more of a step-by-step walkthrough. Very little (if any) of this is original to me; my sources are linked above.

As a big-picture overview, this procedure will install CentOS to one partition on a disk, create a ZFS pool on another partition, copy the CentOS installation to that ZFS pool, and configure Grub to boot from that pool.

Edit: It should be obvious, but don’t do this on a production system, or one you expect to immediately put into production. It’s working for me as far as I can see, but it will need plenty of testing to confirm that it’s going to be stable and not interfere with anything that Neth does with the system.

You’ll start by doing a minimal install of CentOS 7.4. In the CentOS installer, set up custom partitioning. Tell it to use standard partitioning, not LVM. The first partition will be xfs, with a mountpoint of /mnt/for-zfs. Size it as the total size of your disk minus about 15 GB. The second partition will be ext4, with a mountpoint of /. Size it to take the remaining space. The installer will warn you about the lack of a swap partition, but this isn’t a problem—we’ll add that later. The remainder of the installer settings can be left at defaults, and/or set to your preference. Complete the installation and reboot.

On reboot, you’ll find that your network isn’t active, because CentOS inexplicably doesn’t activate network interfaces by default. To activate your network, you’ll first need to determine the name of your network interface. Do this by running nmcli d. Having determined the interface name (for example, enp0s25), bring it up by running ifup enp0s25.

Now that the network is active, determine your IP address by running ip addr. Make a note of this; you’ll need it later. You’ll also be able to use yum to install your favorite text editor. Install rsync as well; we’ll use it later. yum install nano rsync.

Now you can configure your network interface to automatically start on boot. Edit /etc/sysconfig/network-scripts/ifcfg-enp0s25 (replacing enp0s25 with your interface name), find the line that says “ONBOOT=no”, and change that to “ONBOOT=yes”. The network interface will now start up automatically on boot.
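If you'd rather not open an editor for this, the same change can be scripted. This is only a sketch: `enable_onboot` is a helper name I made up, and it assumes the file has a single line beginning with `ONBOOT=`.

```shell
# Hypothetical helper: set ONBOOT=yes in an ifcfg file, keeping a .bak copy.
enable_onboot() {
    cfg="$1"
    # Rewrite only the ONBOOT line; every other setting is left alone.
    sed -i.bak 's/^ONBOOT=.*/ONBOOT=yes/' "$cfg"
}

# Example (the interface name is an assumption, substitute your own):
# enable_onboot /etc/sysconfig/network-scripts/ifcfg-enp0s25
```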

You’ll now need to edit your SSH configuration to allow root logins with password. Edit /etc/ssh/sshd_config. On line 38, uncomment the line that says PermitRootLogin yes. Then restart the SSH service: service sshd restart. You should now be able to connect to this system using SSH, at the IP address you noted above.
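Line numbers in sshd_config can shift between releases, so matching on the text may be more robust than counting to line 38. A minimal sketch, assuming the stock file contains a commented-out `#PermitRootLogin yes` line; `permit_root_login` is a hypothetical helper name.

```shell
# Hypothetical helper: uncomment the PermitRootLogin line, keeping a .bak copy.
permit_root_login() {
    conf="$1"
    # Match "#PermitRootLogin <anything>" and replace it with the enabled form.
    sed -i.bak 's/^#[[:space:]]*PermitRootLogin[[:space:]].*/PermitRootLogin yes/' "$conf"
}

# Example: edit the real file, validate it with sshd's test mode, then restart.
# permit_root_login /etc/ssh/sshd_config && sshd -t && service sshd restart
```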

Run yum update to bring your installed packages up to date.

Unmount the for-zfs partition: umount /mnt/for-zfs. Edit /etc/fstab to remove the listing for that partition.

Install the ZFSonLinux repository: yum install http://download.zfsonlinux.org/epel/zfs-release.el7_4.noarch.rpm.

Edit /etc/yum.repos.d/zfs.repo. In the [zfs] block, set enabled to 0. In the [zfs-kmod] block, set enabled to 1.
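The repo switch can also be done non-interactively. The sketch below assumes the file uses plain `[section]` headers and `enabled=` lines with no surrounding spaces; `switch_to_kmod` is a name I invented for illustration.

```shell
# Hypothetical helper: disable the [zfs] repo block and enable [zfs-kmod].
switch_to_kmod() {
    repo="$1"
    awk '
        /^\[/ { section = $0 }                 # remember the current [section]
        /^enabled=/ {
            if (section == "[zfs]")      { print "enabled=0"; next }
            if (section == "[zfs-kmod]") { print "enabled=1"; next }
        }
        { print }                              # everything else passes through
    ' "$repo" > "$repo.new" && mv "$repo.new" "$repo"
}

# Example:
# switch_to_kmod /etc/yum.repos.d/zfs.repo
```

Other blocks in the file (source, testing, and so on) are left exactly as they were.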

Then install ZFS itself: yum install zfs zfs-dracut.

Create /etc/hostid: dd if=/dev/urandom of=/etc/hostid bs=4 count=1
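The hostid file is just four raw bytes, so it's easy to sanity-check after writing it. A small sketch; `make_hostid` is a hypothetical wrapper around the same dd command, with the output path made a parameter so it can be tried somewhere harmless first.

```shell
# Hypothetical wrapper: write 4 random bytes and print them as hex.
make_hostid() {
    out="${1:-/etc/hostid}"
    dd if=/dev/urandom of="$out" bs=4 count=1 2>/dev/null
    od -An -tx1 "$out"          # show the bytes that were written
}

# Example:
# make_hostid /etc/hostid
```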

Activate the ZFS kernel module: modprobe zfs.

Check your disk IDs: ls /dev/disk/by-id/* -la

Now it’s time to create the pool. This command will specify a number of ZFS feature flags, to ensure that Grub will be able to boot from the pool. Note that the page I used as my main source for these instructions includes the copies=2 option. I’m not including it in this command, as I’ll be creating a two-disk mirror shortly. zpool create -f -d -o feature@async_destroy=enabled -o feature@empty_bpobj=enabled -o feature@lz4_compress=enabled -o ashift=12 -O compression=lz4 -O acltype=posixacl -O xattr=sa -O utf8only=on -O atime=off -O relatime=on rpool /dev/disk/by-id/ata-Hitachi_HDS723020BLA642_MN1210F338JYMD-part1

Turn on the autoexpand property, so the pool will grow as disks are added or replaced: zpool set autoexpand=on rpool

You’ll now want to create the root filesystem: zfs create rpool/ROOT.

Create a tmp directory, and mount the root directory there:

    mkdir /mnt/tmp
    mount --bind / /mnt/tmp

Then use rsync to copy the data: rsync -avPX /mnt/tmp/. /rpool/ROOT/.

Once that finishes, edit /etc/fstab to remove the root partition, and umount /mnt/tmp.

You’ll next create some symbolic links that Grub needs: cd /dev/; ln -s /dev/disk/by-id/* . -i.

Mount proc, sys, and dev into the ZFS pool: for dir in proc sys dev;do mount --bind /$dir /rpool/ROOT/$dir;done.

Now change root into the new root filesystem: chroot /rpool/ROOT/.

Create the Grub configuration: grub2-mkconfig -o /boot/grub2/grub.cfg.

In the output of grub2-mkconfig, note the complete version number of the first linux/initrd images.

Remove the ZFS cache: rm /etc/zfs/zpool.cache

Create the initramfs files: dracut -f -v /boot/initramfs-$(uname -r).img $(uname -r); dracut -f -v /boot/initramfs-3.10.0-693.5.2.el7.x86_64.img 3.10.0-693.5.2.el7.x86_64. In the second command here, if the version number does not match the version number you noted above, change the version number in the command (both places) to match.
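To avoid typing kernel version strings by hand, the dracut commands can be generated from the kernels actually present in /boot. This is a dry-run sketch, not something I've run as part of the procedure above: `dracut_cmds` is a made-up helper, and skipping the rescue kernel is my own assumption.

```shell
# Hypothetical helper: print one dracut command per installed kernel.
# Nothing is executed; pipe the output to sh once it looks right.
dracut_cmds() {
    bootdir="${1:-/boot}"
    for k in "$bootdir"/vmlinuz-*; do
        [ -e "$k" ] || continue                      # glob matched nothing
        ver="${k##*/vmlinuz-}"                       # strip path and prefix
        case "$ver" in *rescue*) continue ;; esac    # assumption: skip rescue image
        echo "dracut -f -v $bootdir/initramfs-$ver.img $ver"
    done
}

# Example (inside the chroot):
# dracut_cmds /boot
```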

Install Grub on your disk: grub2-install --boot-directory=/boot /dev/sda. If that completes with no errors, exit from the new root.

Now unmount proc, sys, and dev from the new root: for dir in proc sys dev;do umount /rpool/ROOT/$dir;done. Reboot the system and cross your fingers.

If you’re lucky, your system will reboot without issue.

Login via SSH again. It’s time to delete the original root partition. Run fdisk /dev/sda. Enter p (to print the partition table), d (to delete a partition), 2 (partition #2), p (to show the table again, making sure it still has the large partition #1), w (to write the partition table and quit).

Reboot the system again.

Log in once more. Run zpool status to confirm that your pool is in good shape.

Now you can begin to install Neth: yum install http://mirror.nethserver.org/nethserver/nethserver-release-7.rpm. Then begin the installation: nethserver-install.

When the installation completes, you’ll have Nethserver installed and running on a single-disk ZFS pool. If that’s your objective, you can stop here, though you’d probably be better off adding a swap partition to your disk in the space previously occupied by your old root partition. The next post will discuss adding disks to create a mirrored volume.

In the last post, I walked through getting Neth running on a single-disk ZFS pool, created on one 2 TB disk. But I really wanted it on a ZFS mirror, and on larger disks. So I installed a pair of 6 TB disks. They’ll need to be partitioned to reserve space for Grub (and also to create swap space), and since they’re over 2 TB, they’ll need GPT partitions.

For reasons unknown, the new disks are /dev/sda and /dev/sdb (the original disk is /dev/sdc). We’ll use parted to set up the partitions. Start with parted /dev/sda, and then enter these commands:

    mklabel gpt
    mkpart bpp 1MB 2MB
    set 1 bios_grub on
    mkpart swap 2MB 8GB
    mkpart zfs 8GB 100%

This will create the partition table, create a small (1 MB) partition, set it as a BIOS boot partition, create a second partition (from 2 MB to 8 GB on the disk) for swap, and create a third partition (from 8 GB to the end of the disk) for your ZFS pool. Then enter select /dev/sdb to switch to the other disk, and enter the same commands there. Then quit.
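The same partitioning could probably be done without the interactive prompt by using parted's script mode (`-s`). I only ran the commands interactively, so treat this as an untested sketch: it assumes script mode accepts the same command sequence on one line, and `parted_cmd` is a hypothetical helper that only prints the command.

```shell
# Hypothetical helper: print the parted invocation for one disk (dry run).
parted_cmd() {
    disk="$1"
    echo "parted -s $disk mklabel gpt" \
         "mkpart bpp 1MB 2MB set 1 bios_grub on" \
         "mkpart swap 2MB 8GB mkpart zfs 8GB 100%"
}

# Print the command for both new disks; review before running anything,
# since mklabel destroys the existing partition table.
for d in /dev/sda /dev/sdb; do
    parted_cmd "$d"
done
```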

Now that you’ve set up the partitions, you’ll need to repeat the earlier step of creating symbolic links to the disk ID entries in /dev/: cd /dev/; ln -s /dev/disk/by-id/* . -i.

You can now install Grub to both of the new disks: grub2-install /dev/sda; grub2-install /dev/sdb.

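Then, add the third partition of each new disk to the pool, running zpool attach once per disk: zpool attach rpool /dev/disk/by-id/ata-Hitachi_HDS723020BLA642_MN1210F338JYMD-part1 /dev/disk/by-id/ata-WL6000GSA6457_WOL240336074-part3. Repeat the command with the by-id name of the third partition on the second new disk.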

zpool status will show you the pool status. Depending on how much data you’ve put onto the pool, resilvering may take a few minutes, but should not be very long.
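Rather than re-running zpool status by hand, you can poll it until the resilver is done. A minimal sketch, assuming the status output contains the phrase "resilver in progress" while it is running; `resilvering` is a hypothetical helper name.

```shell
# Hypothetical helper: succeeds while the status text on stdin
# reports an active resilver.
resilvering() {
    grep -q 'resilver in progress'
}

# Example: check once a minute until the resilver has finished.
# while zpool status rpool | resilvering; do sleep 60; done
```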

Once resilvering has completed, power down the machine, remove the original disk, and reboot.

If your system boots, you’re in good shape. Mine didn’t boot, so I reinstalled the original disk, booted from that, repeated the grub steps above, powered down the machine again, removed the disk again, and booted again. This time, it booted right up.

Once the system will boot with the original disk disconnected, you can remove it from the pool: zpool detach rpool ata-Hitachi_HDS723020BLA642_MN1210F338JYMD-part1.

All that’s left is to set up swap on the two partitions you created: mkswap /dev/sda2; mkswap /dev/sdb2.

And then edit /etc/fstab to activate it automatically. Add the following for each swap partition: /dev/sda2 swap swap defaults 0 0
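If you script the fstab change, it's worth making it safe to run twice. A sketch under that assumption; `add_swap_entries` is a made-up helper that appends the line above for each partition unless one is already present.

```shell
# Hypothetical helper: append a swap line per partition, skipping duplicates.
add_swap_entries() {
    fstab="$1"; shift
    for part in "$@"; do
        # Skip partitions that already have an entry in the file.
        grep -q "^$part[[:space:]]" "$fstab" && continue
        printf '%s swap swap defaults 0 0\n' "$part" >> "$fstab"
    done
}

# Example:
# add_swap_entries /etc/fstab /dev/sda2 /dev/sdb2
```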

To turn on the swap without rebooting, run swapon /dev/sda2; swapon /dev/sdb2.

At this point, you’re done. You have a two-disk mirrored ZFS pool, with Neth installed. Continue to configure your system as desired.

Hi @dan,

you started with a feature request and made a howto out of it! Very nice work! Thank you for sharing.

Great effort @dan!

What might be an option for the less savvy members is using Proxmox as a base and running NS on that. Proxmox supports ZFS out of the box and is very user friendly (even for me).

I have NS running on proxmox with ZFS for some time now and I just love it.

Thanks, but a very rough howto at this point. But I figure if I’m going to ask for a feature, my chances of that feature improve the more information I provide about how to implement it. It’s unfortunate, though, that RH seems allergic to ZFS–after dumping heaven only knows how much money into btrfs, and finally realizing that btrfs is made of failure, RH now seems to want to build their own enterprise filesystem from scratch.

In any event, I know that what I’ve posted works for me, at least for the couple of days I’ve had it up. I’m not convinced it’s the best way to go, and of course I’d be interested in the experience of others.

Certainly, and that’s how I’m running SME right now.

Indeed, thanks for sharing.

Running Proxmox here as well, 5 nethservers on top, bunch of clients, and the diskfiles on a truenas/freenas with zfs. This is the lazy admin setup

As I mentioned above, I’m running a Proxmox host with ZFS already (two of them, actually), and my SME Server installation is running on one of those hosts. I think ext4/xfs on a zvol is better than ext4/xfs on a bare hard drive, as you still get the data integrity that ZFS provides, but you still don’t get the full benefit of all the ZFS-y goodness. Here are a few benefits I see to running Neth with ZFS on bare metal, rather than virtualized:

- Volume expansion is trivial: `zpool add rpool mirror /dev/sdc /dev/sdd` adds another mirrored pair to the pool, the capacity is available immediately, no data needs to be moved/adjusted, nothing needs to be partitioned, etc. With Proxmox, I’d need to expand the ZFS pool (easy enough), then expand the virtual disk, then expand the ext4/xfs filesystem to use the newly-available space.

- Snapshots are taken of the filesystem, not the block device. This means that any snapshots are guaranteed to be in a consistent state.

- Also, since snapshots are taken of the filesystem, you can navigate to the `.zfs` directory and browse through any files that were present at that time, as they were at that time.

- If you replicate those snapshots to another machine for backup, again, you’re replicating a filesystem, and can easily access or retrieve files from that backup. You can replicate snapshots easily in Proxmox (the `pve-zsync` script works very nicely), but what you’re replicating there is a block device (a zvol). You’d need to mount that as an ext4 volume to be able to do anything with it.

- I have no idea how well ZFS compression works on a zvol, but it works pretty well on files.
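To make the snapshot points a bit more concrete, here is roughly what that workflow looks like from the shell. It's a dry run that only prints the commands, and several names are my own assumptions: `snap_name` is a made-up helper, `backuphost` and `backuppool/neth` are hypothetical, and the `/.zfs` path assumes rpool/ROOT is mounted at /.

```shell
# Hypothetical helper: build a dated snapshot name, e.g. rpool/ROOT@manual-2017-11-20.
snap_name() {
    printf '%s@%s-%s\n' "$1" "$2" "$(date +%Y-%m-%d)"
}

snap=$(snap_name rpool/ROOT manual)

# Dry run: print the commands instead of running them.
echo "zfs snapshot $snap"                                   # take the snapshot
echo "ls /.zfs/snapshot/${snap#*@}/etc"                     # browse files as they were
echo "zfs send $snap | ssh backuphost zfs receive backuppool/neth"   # replicate
```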

While Proxmox makes it trivially easy to install onto a ZFS pool, unfortunately it doesn’t include any GUI zfs management tools. So, if you need to replace disks or expand your pool, to the shell prompt you go. Of course, that’s true of Neth as well, and it’s nowhere near as easy to install Neth on ZFS, so…

btw, slight sidenote here, and sorry if this counts as necro, but - does anybody know why/how proxmox is able to offer zfs as a native option, and why they seem to be one of the very few linux projects offering zfs as a native/default/super easy option?

@mrmarkuz @Andy_Wismer @Lincee @Longhair @fausp could you answer Micah?

Shame on me, I still haven’t tested Proxmox intensively enough; I’m still stuck with VMware.

They use ZFS for their virtualization.

With http://zfsonlinux.org/ it seems to be easy to include it in a distro.

It’s a specialized filesystem with snapshots and similar features, used mostly in NAS and virtualization distros. That may be why it doesn’t come up as a super easy option in many distros.

There are some distros and companies providing ZFS already:

I like to ask the NethServer-Community, should we, or the company, implement ZFS on NethServer?

I really like the idea (as should be obvious)–ZFS is a great filesystem/volume manager with some really useful features for data integrity/reliability. However, it would be a big break from upstream–RedHat apparently doesn’t much care for ZFS. So much so that they spent several years (and who knows how much money) on Btrfs, and once they finally realized it wasn’t going to get better, switched to developing their own filesystem instead. So, if we wanted to put Neth on a ZFS root filesystem, we’d be fighting upstream with every release.

I fully agree… Maybe we should just list the pros and cons and discuss it?

And don’t forget, at the end of the day it has to be implemented in the GUI. That’s a lot of work…

That would be ideal, no doubt, but is it essential? What tools does the GUI currently provide to manage software RAID? As far as I can see, none at all–what management is going to be done, has to be done at the shell. I don’t think this is a good thing, but we wouldn’t need to write a GUI ZFS volume manager to reach feature parity with the current software RAID environment. However, the FreeNAS folks have written one that we could probably look at, and NAS4Free has something as well.

Pro:

- Pooled storage - all your storage is in a single storage pool, and can be allocated as desired. There’s no need to partition disks.

- Guaranteed data integrity

- Near-instant snapshots

- Replication makes a great backup solution if your target is also on ZFS

- Compression–it’s fast (in many cases it improves performance by reducing disk I/O), and extra space is always good

- Online pool expansion is trivial, whether by replacing disks with larger ones, or by adding disks to the pool

- IIRC, dataset-level encryption is supported with current versions of ZFS on Linux

- RAID rebuild/resync (resilver) only writes used space, and is therefore much faster than traditional RAID

Con:

- No upstream support

- Resource-hungry–ZFS likes its RAM, largely due to caching. This isn’t as critical as some make it out to be, but you aren’t going to be very happy with ZFS on a machine with 1 GB of RAM.

- No RAID conversion–you can’t change mirrors to RAIDZn, and you can’t convert an n-disk RAIDZ into an (n+1)-disk RAIDZ, though there’s work in progress on the latter

There are certain features in ZFS that have definitely caught my attention, but unfortunately I’ve never had the time or resources to play with it.

If ZFS is included, I’m not sure it should be the default; it may be best as an option to select during installation, as some may not have the hardware resources to use it.

It depends on the admin-knowledge. GUI is easy to handle, especially for beginners.

You can use the boot parameter raid=1 to get a software RAID 1, or you can do manual partitioning in the GUI with the CentOS installer. Neither is really ideal, in my opinion.

SME-Server Textinstaller offers a very handy way to get SoftwareRaid, I like that…

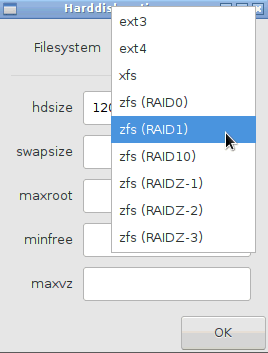

The Proxmox Installer offers ZFS:

These two options - software RAID 1, 5, … and ZFS (RAID0 to RAIDZ-3) - would be great for the next NethServer installer!

To be honest, I fell in love with the flexibility of using Proxmox as an extra layer. I will never ever install a server without a bare metal virtualization layer again…

IMO this approach defeats the need of having ZFS in NethServer, since it is already available by the host…