Hello, I ran into an issue.

On my test machine with Proxmox I set up a NethSecurity VM with the alpha 2 release.

I followed the docs and restored the image to ZFS storage.

Everything ran smoothly.

Yesterday, a blackout shut down my server.

After power was restored, all the VMs started, but the NethSecurity VM won’t boot anymore.

It bootloops: no messages, no errors, as if the disk were blank.

Any suggestions on how to make the VM boot again?

So weird.

I tried two more times.

I made a fresh VM. On the first try I installed NethSecurity from the img to a clean disk; on the second try I imported the img and booted from it.

NethSecurity started fine. I applied the latest updates.

In the web console I pressed the shutdown button. After powering the VM back on, it bootloops. The message is: “no bootable device”

I followed the documentation step by step. The only difference in my environment is ZFS instead of LVM.

Sorry for the late response, I completely missed this thread.

Did you apply the fixes or the whole image upgrade?

If you tried the image upgrade, it seems that the data is not actually written to the disk before the reboot.

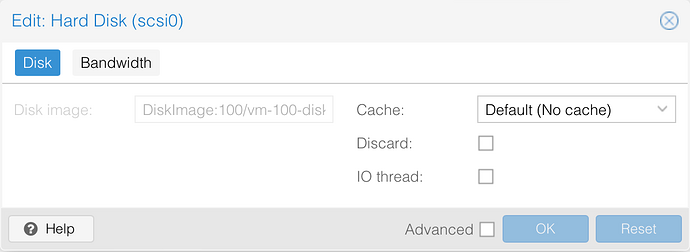

Assuming this is the case, you could try changing the disk cache type to directsync and see if the issue happens again. Take a look at Performance Tweaks - Proxmox VE
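For anyone who prefers the Proxmox host shell over the web UI, here is a minimal sketch of that cache change. The VM ID `103`, the `scsi0` slot and the volume name `local-zfs:vm-103-disk-0` are assumptions for illustration only; `qm config <vmid>` shows the real values. The function only prints the `qm set` command so it can be reviewed before being run on the host.

```shell
# Hypothetical values: adjust to your VM (check with `qm config <vmid>`).
VMID=103
DISK_SLOT=scsi0
VOLUME=local-zfs:vm-103-disk-0

# Build the `qm set` command that re-declares the disk with a given cache mode.
build_cache_cmd() {
    # $1 = cache mode, e.g. none, writethrough, writeback, directsync
    printf 'qm set %s --%s %s,cache=%s\n' "$VMID" "$DISK_SLOT" "$VOLUME" "$1"
}

# Print the command for review; run it on the Proxmox host once it looks right.
build_cache_cmd directsync
```

As far as I know, `qm set` replaces the whole disk entry, so options such as `discard=on` or `iothread=1` would need to be repeated after the volume name if they should be kept.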

Let’s see if anyone else had a similar issue.

Maybe @francio87 ?

Today I’ll do some tests with ZFS and Ceph, but for now I can say that I haven’t had any problems with local/lvm/btrfs.

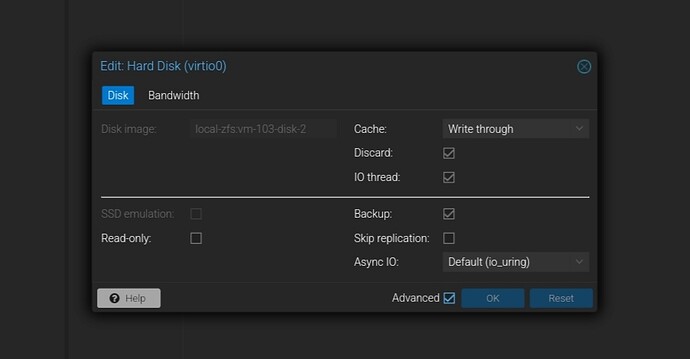

My settings are writethrough cache and discard + iothread + VirtIO SCSI single on the VM disk. It is my default choice for every VM.

I will try directsync and keep you informed.

I analyzed the situation: the damaged disk is recognized as 256 KB in size.

On Proxmox I originally created it as a 4 GB disk.

There is no way to access or reuse the disk. GParted told me the same story: a 256 KB partition.
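One way to narrow down where the 256 KB comes from might be to compare the size ZFS itself reports for the volume with the size requested at creation. This is only a rough sketch, assuming the default `local-zfs` layout where VM volumes live under `rpool/data`; the dataset name below is hypothetical, and `zfs list -t volume` shows the real one.

```shell
# Hypothetical dataset name; `zfs list -t volume` shows the real one.
DATASET=rpool/data/vm-103-disk-0
EXPECTED_BYTES=$((4 * 1024 * 1024 * 1024))   # the 4 GB requested at creation

# Succeed when the reported size is at least the expected size.
size_ok() {
    # $1 = reported size in bytes, $2 = expected size in bytes
    [ "$1" -ge "$2" ]
}

# Read the volume size in bytes, but only if the zfs tool exists on this host.
if command -v zfs >/dev/null 2>&1; then
    actual=$(zfs get -Hp -o value volsize "$DATASET" 2>/dev/null)
    if [ -n "$actual" ] && size_ok "$actual" "$EXPECTED_BYTES"; then
        echo "volsize looks right: $actual bytes"
    else
        echo "volsize missing or smaller than expected: ${actual:-unknown}"
    fi
fi
```

If the volume still reports its full size on the host while the guest sees 256 KB, that would point at damaged contents (for example the partition table) rather than at the volume itself, but that is a guess on my side.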

I made a new 3 GB disk with directsync cache and installed NethSecurity to it.

OS started.

Made all the necessary updates.

Shut down.

OS started again.

Shut down again.

OS started again.

The disk survived: it is still accessible and boots.

The problem could really be related to the disk cache type.

The update with writethrough broke the disk.

It is the first time this has happened to me.

Is there any way to keep the writethrough cache on the disk?

Thank you.

I really do not know Proxmox well enough, but generally speaking I know that the writethrough cache sometimes has problems when dealing with raw disk writes.

We develop NethSecurity on KVM, but I have never experienced such a problem. My VMs run with virtio disks.

I didn’t have much time yesterday and please remember that I’m not very familiar with zfs (I only have one test cluster with 2 disks with zfs), but from my tests, all with proxmox 8.1-4:

restore image ok (as per manual) with storage type:

- directories (USB disk)
- NFS (on nas)
- lvm (local)
- lvm-thin (local)
- btrfs (local)
- rbd-pve (ceph)

to test:

- directories (local)

with problem:

- zfs (local, mirror-0, thin provision)

And this is where things get weird:

- Restore of the beta image (also tested with alpha and the latest build): bootloop. I tested various controller/cache/Async IO configurations without luck, and if I move the image to Ceph storage it also bootloops.

- Restore of the image on Ceph (another VM), then reassigned to the VM created for NethSecurity on ZFS storage: all OK. Updating while still on ZFS: all OK.

- VM created on Ceph and working, backup to local and restore on ZFS: bootloop.

So from a first test it seems to me that the problem is in the importdisk phase on ZFS.

@sarz4fun

If you have another storage configured, could you try point 2, i.e. run importdisk on another storage and then move the disk to ZFS?
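In shell terms, point 2 could look roughly like the sketch below. The VM ID `103`, the staging storage `local-lvm`, the resulting volume name `vm-103-disk-0` and the `scsi0` slot are all assumptions; the image file name is the beta1 image discussed in this thread. The function just prints the commands for review rather than running them.

```shell
# Hypothetical values; adjust to your setup.
VMID=103
IMAGE=nethsecurity-8-23.05.2-ns.0.0.1-beta1-x86-64-generic-squashfs-combined-efi.img
STAGING=local-lvm     # assumed name of a non-ZFS storage that is known to work
TARGET=local-zfs

# Print the three steps instead of running them, so they can be checked first.
print_plan() {
    # 1. Import the image onto the staging storage.
    echo "qm importdisk $VMID $IMAGE $STAGING"
    # 2. importdisk leaves the volume as an unused disk; attach it to a slot.
    #    The volume name is a guess, check `qm config` for the real one.
    echo "qm set $VMID --scsi0 $STAGING:vm-$VMID-disk-0"
    # 3. Move the attached disk to the ZFS storage.
    echo "qm move-disk $VMID scsi0 $TARGET"
}

print_plan
```

As far as I know, older Proxmox releases spell the last command `qm move_disk`, and the same move is available in the web UI under the disk actions of the VM's Hardware tab.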

I hope to have some more time to test, it’s really strange…

Yes, it is usually my default choice.

This is the only way I found to import the image on ZFS:

root@pve-test:~# qm importdisk 103 nethsecurity-8-23.05.2-ns.0.0.1-beta1-x86-64-generic-squashfs-combined-efi.img local-zfs

The disk is bootable.

I used this image to run ns-install to a blank disk.

Both disks, blank and imported, end up in a bootloop after the upgrade.

Mhh, from what I understand you don’t need a second disk for the installation if you use the image for Proxmox.

Let’s say that, following the guide here:

Install on Proxmox (Installation — NethSecurity documentation)

after setting the boot order on the VM it should boot, and then you can configure it.

I think ns-install is more suited to booting from USB and installing to a disk on hardware, or to passing a USB pendrive through to a Proxmox VM in order to install on a VM disk, which is possible but probably overcomplicated.

In the meantime I did some other tests with qm importdisk, but it just doesn’t start for me on ZFS.

It is only for testing purposes, to check whether the two cases, an imported disk and an image flashed to a blank disk, make any difference. In my tests, only with writethrough cache does the disk size change to 256 KB after the flash update, and the disk is no longer accessible.

I think somebody found the same problem on the Proxmox forum.

The next disk survival tests I will do when I have time:

- Different cache methods on an LVM partition.
- Backup and restore with PBS / vma.gz on LVM/ZFS.
- Live migration between nodes and reboot after image flash.

I cannot test live migration on Ceph.

Tests I’ve already done on the beta1 image:

- server hard reset after image flash / cache writethrough: KO
- imported image, upgrade, reboot / cache writethrough: KO
- image flashed with ns-install, upgrade, reboot / cache writethrough: KO
- imported image, upgrade, reboot / cache directsync: OK
- flashed image, upgrade, reboot / cache directsync: OK
- imported image, reboot without upgrade / cache writethrough: OK
- imported image, live migration between nodes (shared storage, not Ceph), reboot without upgrade / cache directsync: OK

Hello,

I did some tests:

Cache writethrough and directsync:

Backup and restore worked correctly, both from local and from PBS to ZFS.

All 8 backup/restore combinations: OK.

Apparently, with writethrough cache, the disk survived after the latest update package.

Live migration between nodes: OK.

Update and stop VM: OK.

The last tests remaining are on the LVM partition. It is a bit difficult for me to run these tests now.

Hi Andrea

So basically, which options make it work?

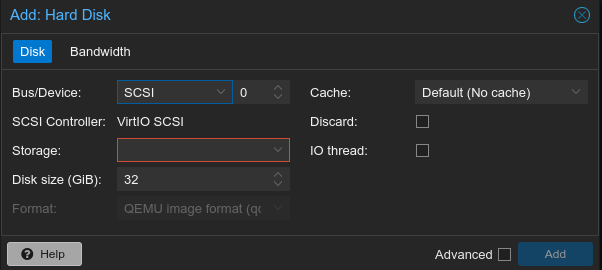

For Bus/Device, do I leave SCSI as the NethSecurity documentation recommends, or do I use VirtIO Block?

And for cache?

Discard and IO Thread?

Thank you

I used VirtIO SCSI single.

This is my disk setting.

Works for me.

With the past release, writethrough cache broke the disk after the image upgrade. Now I no longer have problems with the disk cache.

But my problem is that when I import the image file and attach it to the VM, the VM doesn’t boot.

Yesterday morning, after the VM had been off for 5 days, I started it and got a bootloop. Argh.

Let’s change the cache to no cache again and hope that it won’t bootloop again.

What did I do in these 5 days?

Proxmox apt-get update && apt-get upgrade

Host reboot

Hi @sarz4fun

So after all the tests you have done, what is the conclusion?

In the end, what is the configuration for the VM on Proxmox with ZFS? Does it work if I reboot/shut down and upgrade NethSecurity?

It is weird. NethSecurity worked great for days with writethrough cache. Now it bootloops again.

I disabled the cache again and will continue testing with ZFS.