I agree and I like the names proposed by @dnutan.

@filippo_carletti @davidep do you agree too?

Woah, great! And a lot of test cases.

I will try to test it, but I’m very busy at work right now…

BTW, +1 for @dnutan’s proposal, but “enhanced backup” is also really nice.

Ok for me.

My hint, because there’s already a “config backup”.

The manual with the rename proposal has been merged:

http://docs.nethserver.org/en/latest/backup.html

We are waiting for testing volunteers!

I tested up to test case 3c; everything worked except:

Test case 3a: green banner after the restore, but the test files in the directory root were not restored.

Test case 3b: didn’t work:

rsync: change_dir "/mnt/backup/testserver/latest/" failed: No such file or directory (2)

rsync error: errors selecting input/output files, dirs (code 3) at flist.c(2118) [sender=3.1.2]

The dashboard had few issues, it should be fixed now.

I think it was actually restored correctly, but the UI didn’t report the right path.

I can’t reproduce it.

Could you please post the output of:

config show backup-data

/etc/e-smith/events/actions/mount-<yourfs> && ls /mnt/backup/<hostname>/

restore-data

Reported issues should be fixed.

Here you are…

[root@testserver ~]# config show backup-data

backup-data=configuration

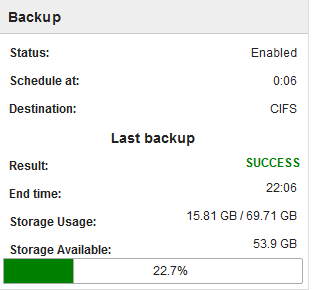

BackupTime=0:06

CleanupOlderThan=28D

FullDay=0

IncludeLogs=disabled

LogFile=/var/log/last-backup.log

Mount=/mnt/backup

NFSHost=

NFSShare=

Notify=error

NotifyFrom=

NotifyTo=root@localhost

Program=rsync

SMBHost=192.168.1.100

SMBLogin=

SMBPassword=

SMBShare=backuprsync

Type=incremental

USBLabel=

VFSType=cifs

VolSize=250

WebDAVLogin=

WebDAVPassword=

WebDAVUrl=

status=enabled

[root@testserver ~]# /etc/e-smith/events/actions/mount-cifs && ls /mnt/backup/testserver/

2018-07-06-010120 backup-config.tar.xz backup.marker

[root@testserver ~]# restore-data

Restore started at 2018-07-06 15:35:09

Event pre-restore-data: SUCCESS

/usr/bin/rsync -D --compress --numeric-ids --links --hard-links --one-file-system --itemize-changes --times --recursive --perms --owner --group --stats --human-readable /mnt/backup/testserver/latest/ /

rsync: change_dir "/mnt/backup/testserver/latest" failed: No such file or directory (2)

Number of files: 0

Number of created files: 0

Number of deleted files: 0

Number of regular files transferred: 0

Total file size: 0 bytes

Total transferred file size: 0 bytes

Literal data: 0 bytes

Matched data: 0 bytes

File list size: 0

File list generation time: 0.001 seconds

File list transfer time: 0.000 seconds

Total bytes sent: 20

Total bytes received: 12

sent 20 bytes received 12 bytes 64.00 bytes/sec

total size is 0 speedup is 0.00

rsync error: some files/attrs were not transferred (see previous errors) (code 23) at main.c(1178) [sender=3.1.2]

Action '/etc/e-smith/events/actions/restore-data-rsync': SUCCESS

Event post-restore-data: SUCCESS

Restore ended at 2018-07-06 15:35:12

Time elapsed: 0 hours, 0 minutes, 3 seconds

This is the real error: inside the directory, the scripts should create a file named “latest”, which is a hard link pointing to the latest snapshot.

Does your remote fs support hard links?
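One way to answer that question is to try creating both kinds of link on the mounted share. The helper below is a hypothetical sketch (`check_links` is not part of NethServer); it assumes the backup target is already mounted and writable, for example at /mnt/backup.

```shell
#!/bin/sh
# Hypothetical helper (not part of NethServer): report whether a directory
# supports symbolic links and hard links by trying to create one of each.
check_links() {
    dir="$1"
    probe="$dir/.linkprobe.$$"        # temporary target file, unique per shell
    touch "$probe" || return 2        # the directory must be writable

    if ln -s "$probe" "$probe.sym" 2>/dev/null; then
        echo "symlinks: supported"
    else
        echo "symlinks: NOT supported"
    fi

    if ln "$probe" "$probe.hard" 2>/dev/null; then
        echo "hard links: supported"
    else
        echo "hard links: NOT supported"
    fi

    # Remove the probe file and any links that were created
    rm -f "$probe" "$probe.sym" "$probe.hard"
}
```

Usage, after mounting the share: `check_links /mnt/backup`. The probe only tells you what the mount accepts right now; it does not say which Samba or CIFS option would change that.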

It’s a NethServer Samba share, so XFS under CIFS, but it doesn’t seem to support hard links. I tried some Samba options without success.

ln: failed to create symbolic link ‘/mnt/backup/testserver/latest’: Operation not supported

This is quite interesting! It’s probably some missing samba option…

I will test it first thing on Monday!

Samba doesn’t support symlinks (and it also has some problems with hard links) without a specific, insecure configuration.

I wasn’t even able to get working symlinks with a special configuration.

So I guess we should add a note to not use CIFS mounts on NethServer with rsync backup.

What do you think @mrmarkuz ?

Fully agree, so users are warned.

Done!

Does anyone else want to join the testing? If you have concerns, I can only say that we have had this implementation in production for a couple of weeks!

I started testing today! Here are some comments and findings:

Regarding the naming (“Main”, “secondary”, “single”, “multiple”, …): why is this differentiation needed at all? I find it really misleading, especially without a UI. For me, a single backup should be nothing more than a multiple backup with only one configuration. Keeping the “old” way of doing things will only introduce confusion and doesn’t seem to have any advantage, except maybe some backward compatibility (?)

Regarding the documentation: the “Single backup” configuration states that the BackupTime key must be in hh:mm format, while the “Multiple backup” entry must use cron syntax. Again, this is misleading.
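To make the mismatch concrete: an hh:mm value is just a daily cron schedule. The helper below is a hypothetical sketch (`hhmm_to_cron` is not part of nethserver-backup) that converts one notation into the other; it assumes a 24-hour hh:mm input and only illustrates how the two formats relate.

```shell
#!/bin/sh
# Hypothetical helper (not part of nethserver-backup): convert an "hh:mm"
# BackupTime (single backup) into the equivalent daily cron schedule
# "minute hour * * *" (multiple backups).
hhmm_to_cron() {
    case "$1" in *:*) ;; *) echo "invalid time: $1" >&2; return 1 ;; esac
    hour=${1%%:*}   # part before the colon
    min=${1#*:}     # part after the colon

    # Accept one or two digits on each side, nothing else
    case "$hour" in [0-9]|[0-9][0-9]) ;; *) echo "invalid time: $1" >&2; return 1 ;; esac
    case "$min"  in [0-9]|[0-9][0-9]) ;; *) echo "invalid time: $1" >&2; return 1 ;; esac

    # Drop leading zeros ("06" -> "6", "00" -> "0") to keep the output canonical
    hour=$(echo "$hour" | sed 's/^0*\([0-9]\)/\1/')
    min=$(echo "$min" | sed 's/^0*\([0-9]\)/\1/')

    if [ "$hour" -gt 23 ] || [ "$min" -gt 59 ]; then
        echo "out of range: $1" >&2
        return 1
    fi
    echo "$min $hour * * *"
}
```

For example, `hhmm_to_cron 23:00` prints `0 23 * * *` and `hhmm_to_cron 0:06` prints `6 0 * * *`. The reverse is not always possible: a cron value such as `0 * * * *` (every hour) has no hh:mm equivalent, which is one argument for using cron syntax everywhere.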

The CleanupOlderThan entry is (or should be!) useless with the rsync backend, and the documentation should say so. I can open a PR if someone confirms that.

duc index: I’m still not convinced about this. I find this tool really resource-hungry and not optimised (the index must be entirely rebuilt each time a backup runs; imagine indexing a multi-terabyte file server that is backed up every hour!).

For the rsync backend, the duc index is not even needed when restoring, since the file list can easily be built by listing the files in the “latest” backup folder.
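For illustration, such a listing can come straight from the snapshot tree. The sketch below is hypothetical (`list_latest_files` is a made-up name); it assumes the layout visible in the restore log in this thread, where /mnt/backup/<hostname>/latest points to the most recent snapshot directory, and that the backup target is already mounted.

```shell
#!/bin/sh
# Hypothetical sketch: build the restore file list directly from the rsync
# snapshot tree instead of querying a duc index.
# Assumed layout: <base>/latest is a link to the most recent snapshot dir.
list_latest_files() {
    base="$1"                        # e.g. /mnt/backup/testserver
    snap="$base/latest"
    if [ ! -d "$snap" ]; then
        echo "no latest snapshot under $base" >&2
        return 1
    fi
    # List regular files, print them as absolute paths of the original
    # system ("./etc/x" -> "/etc/x"), sorted for a stable listing
    (cd "$snap" && find . -type f | sed 's|^\./|/|' | sort)
}
```

Usage: `list_latest_files /mnt/backup/testserver`. The output is line-oriented, so file names containing newlines would be split; a real implementation would need something like `find -print0`.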

For the restic backend, a snapshot can also be listed with restic ls -l latest

Conclusion: in my view, this index should only be created when needed, if at all.

I configured my system as follows:

Primary backup disabled:

# config show backup-data

backup-data=configuration

BackupTime=23:00

CleanupOlderThan=14D

FullDay=1

IncludeLogs=disabled

LogFile=/var/log/last-backup.log

Mount=/mnt/backup

NFSHost=

NFSShare=

Notify=always

NotifyFrom=

NotifyTo=root@localhost

Program=rsync

SMBHost=

SMBLogin=

SMBPassword=

SMBShare=

Type=incremental

USBLabel=external

VFSType=usb

VolSize=250

WebDAVLogin=

WebDAVPassword=

WebDAVUrl=

status=disabled

Secondary backup, configured to run every hour and back up to the USB disk labelled “external” using the rsync backend:

# db backups show

t1=rsync

BackupTime=0 * * * *

CleanupOlderThan=default

Notify=always

NotifyFrom=

NotifyTo=root@localhost

USBLabel=external

VFSType=usb

status=enabled

Then I notified the system about the new backup scheme:

signal-event nethserver-backup-data-save t1

I launched the backup manually, and it found my previous backups and continued backing up incrementally. Nice!

I’ll report later if needed.

THANKS for the good work!

Update: it looks like when the main backup is disabled, the cron job for the secondary backup isn’t created, so the backup never starts.

We can’t remove it: we must preserve backward compatibility with thousands of servers.

But we can hide the single backup when we implement a new UI using Cockpit.

I agree, but this is the most flexible implementation. We will also hide this complexity.

Already removed in this commit: https://github.com/NethServer/nethserver-rsync/commit/2da9d79eff92f5dc0ee92a4e11b6b85a74487a8e

(Upstream is implementing it, but there are still some bugs.)

I forgot to remove it from the README, just done.

No one is. It’s a bad hack for a problem without a simple solution.

No, it is not: the index is incremental, but it’s still way too slow!

This is true from the command line, but not from the UI, which currently needs to build a tree.

This is an error inside the cron.d template, already fixed. Thank you for reporting!

Question time…

Restic seems the most interesting engine. Is there any plan to integrate a REST server into NethServer?

Not officially for now, but you can find the instructions here: https://github.com/NethServer/nethserver-restic/blob/master/README.rst

Hi @giacomo

Okay. I’m still not sure I see the backward compatibility issue (it looks easier to migrate old settings to fit the new backup system), but I trust you.

Don’t forget the documentation.

Remember that we already shared some thoughts about this:

We could also use some Lucene on-the-fly indexing if we really want the UI to be super responsive, instead of building a file list when the UI page loads. I’m not sure it’s worth the trouble: someone wanting to restore some files will readily accept waiting a few seconds while the file list is built.

My pleasure!